‘How will we interact with AI in the future?’ asks Clare Rainey, a lecturer in diagnostic radiography at Ulster University. ‘We need to know how to be ready for a digital future in a safe, responsible and informed way.’

Clare was awarded £10,000 last August through the College of Radiographers’ Industry Partnership Scheme (CoRIPS) to research how AI influences radiographers’ reporting decisions. While AI has the potential to open up many opportunities in radiography and healthcare, caution is required to ensure it works for radiographers and, in turn, for patients.

‘A lot of my focus is on how humans do interact or will interact with artificial intelligence, and what are the best ways in which humans will interact with it,’ says Clare.

Demand for radiography services is increasing and will continue to increase, as highlighted in the Richards report for NHS England last year – and that rising demand drives the adoption of new technologies and equipment.

‘We need to be aware of the potential opportunities and pitfalls of AI and the implementation phase of the NHS Long Term Plan, which states that we will be leaders in machine learning in the UK in the near future,’ Clare adds.

‘The implementation phase of some of these technologies is coming. We need to know now how to approach any AI implementation critically and realistically. For radiographers of the future, I strongly believe that this is going to be intrinsic to our radiography training and in our radiography practice because we are going to be living and working alongside AI more and more.’

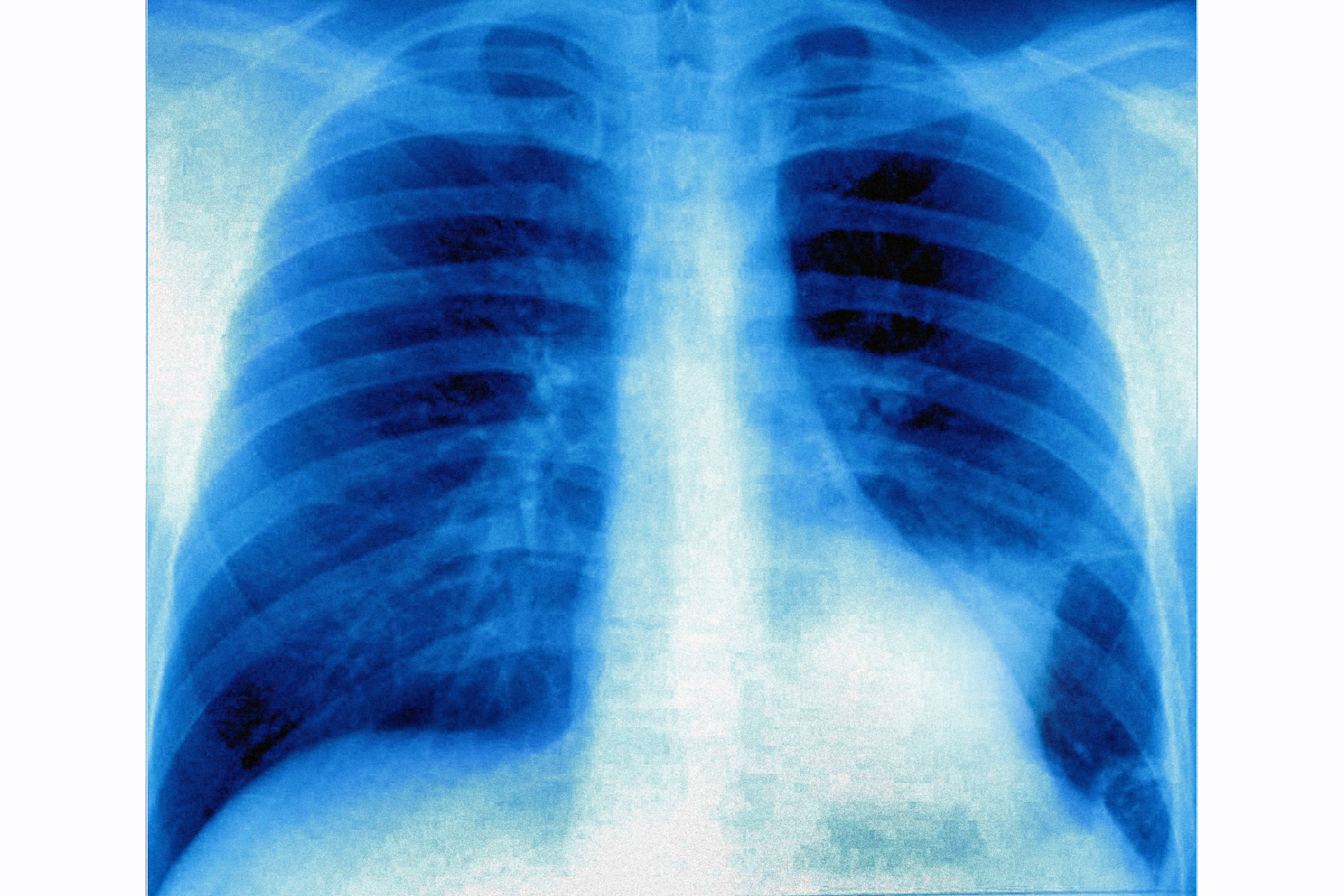

Clare’s study will involve asking radiographers to review an X-ray and decide whether they agree with a diagnosis provided by an AI algorithm built specifically for the project.

Two important features of her research are binary feedback and decision switching. Clare explains: ‘Binary feedback means the AI will tell you “fracture or no fracture”. Decision switching is if the AI makes someone change their mind.

‘For example, I’m presenting a radiograph of a wrist and the radiographer interpreting the image says there is no fracture on the image. But then the AI produces a heat map – or a decision or a percentage confidence or any other type of AI feedback based on that radiograph – and says that there is a fracture on that image. Does that then cause the user to change their mind about their initial decision or, if it doesn’t, does it make them unsure of their initial decision as well?’

By understanding decision switching, Clare hopes that radiographers can use AI to provide ‘optimum value in terms of accuracy and patient interaction’. In other words, rather than radiographers relying on AI to deliver accuracy and patient interaction, AI would be engineered to work with them to provide the optimum level of accuracy and to improve interaction with patients.

Another way to look at this is through ‘automation bias’, which refers to how reliant we are on machines. There could be a problem if users place too much trust in AI, so understanding the influence of decision switching on radiographers’ reporting of X-rays is also about developing an appropriate level of trust in AI.

While Clare is cautious about the developments coming with AI, she is also optimistic. ‘The 21st century skills that radiographers and radiologists have are not replaceable by machines,’ she says. Rather, she hopes ‘it will help to free up our time in those important avenues rather than spending time on mundane tasks.’

Indeed, Clare expects radiographers to adopt AI in the same way that the profession adopted PACS: ‘As radiographers we’re good at adopting technology and we’re good at understanding it.’

You can take part in Clare’s study ‘An observational study to investigate the impact of AI feedback on image comment, decision switching and trust perception in student and experienced radiographers’.